Your Guide to Updating Your Tracking Plan (Without Breaking Everything)

We talk a lot about how to create a great tracking plan. But what happens when you need to update an existing plan after your product launches? Here are some tips for revising your tracking plan to capture all the data you didn't know you needed (without breaking anything).

Your product is out. Your tracking plan is in place. Now the work really begins, at least as far as your tracking plan is concerned.

But just because a tracking plan was great pre-launch doesn't guarantee it'll stay that way on its own. Even the best and most thoughtfully laid-out courses need correction from time to time. Your tracking plan is no different.

No matter how well you design your tracking plan pre-release, chances are, new questions about your users and product will arise after things go live. Here's how to adapt your tracking plan without breaking your analytics—or undoing your sanity—in the process.

Look at your data (nearly) every day

The first step in adjusting your tracking plan is knowing whether you need to in the first place. Look at your data every day for the first few weeks that your product is live. Be sensitive to any technical flaws. Is your analytics platform collecting information accurately? Is the data you're gathering answering the questions you set out to answer during that initial purpose meeting? Have the questions you've already answered left you with no follow-ups?

By looking at your data daily, you may find that the answer to all those questions is: "Well, yes!" In such an instance: congratulations! You've built the perfect tracking plan, and you are in no need of the remainder of this article. Have a cookie. 🍪

More likely, though, you'll walk away from checking your data with even more questions about your users and how they're leveraging your product. In which case, there's work to do. It's OK, it happens to the best of data teams (even ours).

After one of our launches, we realized that our tracking plan was designed in a way that forced us to dig into our raw data to find out how long branches had been open. We revised our tracking plan and added a new property that lets us measure the number of hours and days that branches have been open.
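As a rough illustration of that kind of property, here is a minimal sketch that derives open duration from a branch's creation timestamp at the moment an event is sent. The function name and the property names "Branch Hours Open" and "Branch Days Open" are hypothetical, chosen for this example rather than taken from our actual tracking plan.

```python
from datetime import datetime, timezone


def branch_open_duration(opened_at: datetime, now: datetime) -> dict:
    """Build hypothetical event properties describing how long a branch has been open."""
    elapsed = now - opened_at
    # Whole hours elapsed; partial hours are dropped.
    hours_open = int(elapsed.total_seconds() // 3600)
    return {
        "Branch Hours Open": hours_open,    # hypothetical property name
        "Branch Days Open": hours_open // 24,  # whole days, derived from whole hours
    }


opened = datetime(2021, 3, 1, 9, 0, tzinfo=timezone.utc)
checked = datetime(2021, 3, 4, 15, 30, tzinfo=timezone.utc)
props = branch_open_duration(opened, checked)
print(props)  # {'Branch Hours Open': 78, 'Branch Days Open': 3}
```

Sending the duration as a property on the event means the number is available directly in your analytics tool, instead of being reconstructed from raw data later.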

For the first few weeks after your launch, look at your data daily. Then, once you’ve built a good baseline of metric performance, you can shift to a weekly cadence for reviewing your short-term KPIs. Once upon a time, it was acceptable to consult data monthly, quarterly, even yearly. Now, as we're deep in COVID-19 country and the new, fast-paced normal that came with it, markets and customer needs shift on a dime, so frequent consultation of data is advisable.

Speaking at Amplitude's recent Amplify conference, executives from elite companies unanimously agreed about a need to be more agile and responsive with data. Shipt's VP of data science, Vinay Bhat, suggested that looking at data every day and "using shorter-term trends to quickly iterate off of" allowed Shipt to be more diligent about responding to new customer trends.

Along similar lines, Shopify's director of data science, Philip Rossi, explained that daily data checks gave his team the ability to show people a snapshot of their performance at any given time.

“On any given day . . . we could give people the [data] insights we had at that particular time, knowing we'd be looking for new ones the next day,” he said.

Whatever the nature of your business, those are good precedents to follow.

Listen to your product manager's suggestions

Your product manager is the one closest to the KPIs and goals for your product. They may or may not be data-savvy, but that doesn't necessarily matter. The questions they have post-release can help improve your tracking plan and bring it more in line with the business's goals. They'll also be getting feedback from other team members about potential areas of improvement in your tracking plan and new questions to be answered.

For instance, say you want to figure out where in the user journey your users are dropping off. You start out with a single event in your tracking plan that measures overall adoption. A few days after your launch, one of your product managers comes to you and suggests a change that would help your designers understand which features need improving (rather than just measuring adoption generally). After collaborating with your product manager, you decide to track adoption-rate data per feature, which lets your design team see where they can improve individual features to remove friction from your UX.
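To make the difference concrete, here is a minimal sketch of per-feature adoption computed from events that carry a feature name. The event shape, the `feature_name` key, and the sample data are assumptions made for illustration; your analytics tool will have its own schema and will usually do this aggregation for you.

```python
from collections import defaultdict

# Hypothetical sample events: each records which user used which feature.
events = [
    {"user_id": "u1", "event": "Feature Used", "feature_name": "Export"},
    {"user_id": "u2", "event": "Feature Used", "feature_name": "Export"},
    {"user_id": "u1", "event": "Feature Used", "feature_name": "Export"},  # repeat use
    {"user_id": "u3", "event": "Feature Used", "feature_name": "Share"},
]


def adoption_by_feature(events, active_users: int) -> dict:
    """Share of active users who used each feature at least once."""
    users_per_feature = defaultdict(set)
    for e in events:
        # A set counts each user once per feature, however often they used it.
        users_per_feature[e["feature_name"]].add(e["user_id"])
    return {name: len(users) / active_users for name, users in users_per_feature.items()}


rates = adoption_by_feature(events, active_users=4)
print(rates)  # {'Export': 0.5, 'Share': 0.25}
```

A single overall adoption event could not produce this breakdown, which is why the feature name has to be part of the tracking plan.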

Regularly check in with your product manager after their first round of revisions to ensure that your data feeds into the future of the product. If they’re missing a particular kind of needed data — “Why aren't we getting info about follow-through from feature-set a to feature-set b?” — you can make that integration or add a new event to capture that information. Alternatively, if there's a metric they no longer need — “We're a month in; we don't need to still be checking how many users are coming in from our launch-day content” — you can streamline your plan.

Run any changes by your developers and data experts

All potential adjustments to your tracking plan should be gut-checked by your developers and data experts. This ensures the tracking plan is designed well and that it's possible to track the data being requested.

For instance, let's say you have two stakeholder requests: one from your product manager and one from your sales department. Your product manager wants to add information to your tracking plan about how many users are going from your “Start trial” page, through your “Demo,” to your “Premium Landing Page.” Your sales department wants to know how many users use your product after visiting a particular other site.

Once you know the data they want tracked, check with your developers that it's possible to collect that data. It's likely that your developers could very easily implement your product manager's request, given the right analytics tool. However, your sales department's request may be more difficult. That information may not be available at the moment when the event should be sent, or it may be better served by a qualitative approach to collecting it.

Finally, once you've settled on what can and will be added to your tracking plan, have your data experts come in. Their job will be to review the new structure to ensure that it meets your data governance standards in security, cleanliness of data, and usability.

Refer back to your established naming conventions

When updating your tracking plan, refer back to your original tracking document and established naming conventions to avoid duplication errors and casing inconsistencies. You created your initial tracking plan for a reason, and that reason was ensuring data continuity post-release. Tracking plans are useful only if the data they include is usable, so how you organize and communicate that data matters.

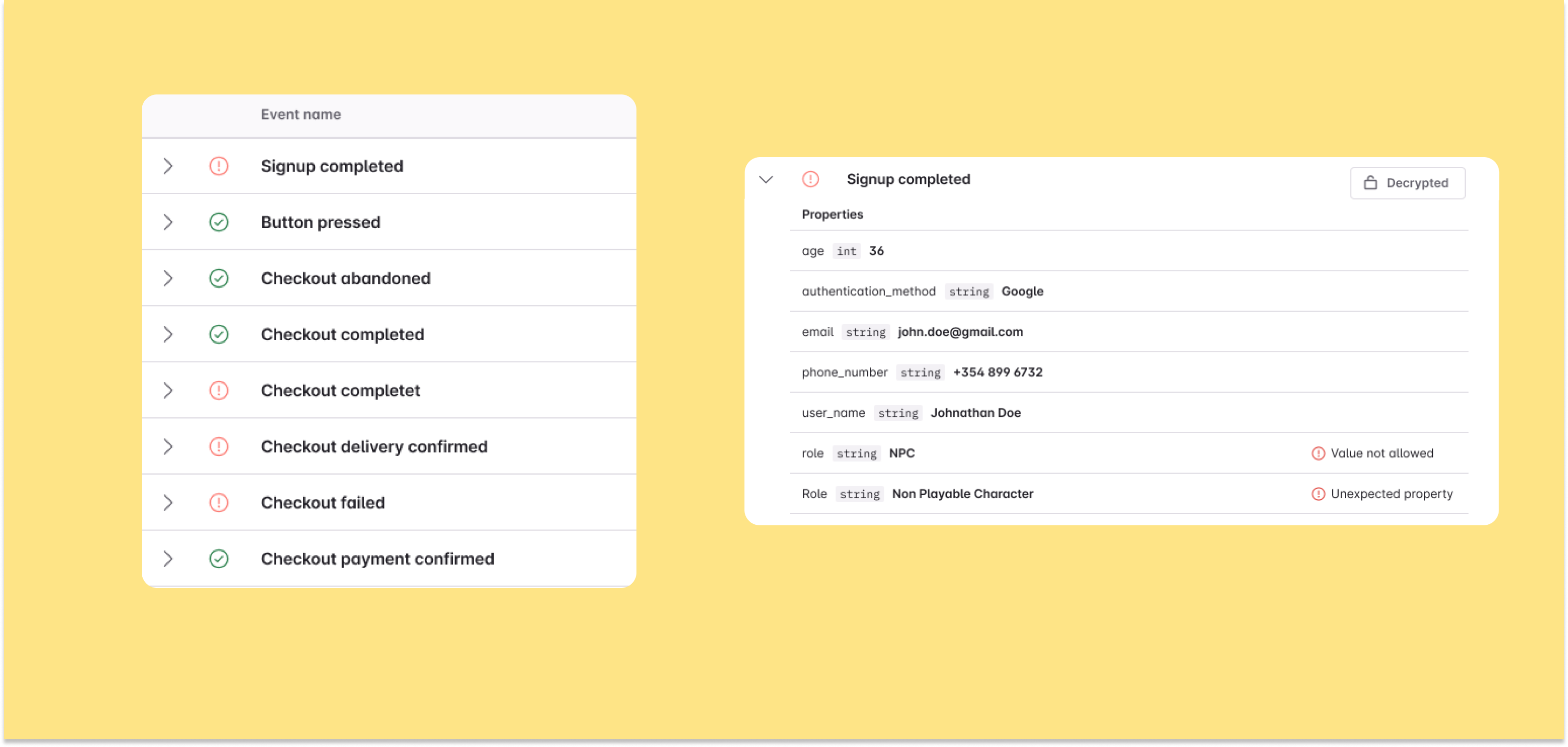

Naming conventions are crucial for maintaining that continuity. However, enforcing consistent naming conventions is one of the biggest challenges for companies because errors are often small and seemingly innocuous. But all it takes is for one stakeholder to refer to “customers” as “clients” during input for that potentially vital data to become siloed and inaccessible in an extensive database. For that reason, the most powerful tool to maintain data continuity is a naming convention that's well defined and available to everyone to prevent these problems.

Developers (and anyone creating new events and properties in tools like Avo) should pay particular attention to the casing of names, order of objects and actions (e.g. Button Clicked vs Clicked Button), and the tense to avoid creating messy duplicates or confusing names. A good example of a naming convention will have the following features:

- A settled practice for cases (e.g., everything in “UPPERCASE,” everything in “lowercase,” everything in “camelCase”)

- A unified approach to separating words (e.g., underscores, spaces, hyphens, or camelCase)

- IDs that include the data type expected for each variable

- Human-readable names that clearly and concisely communicate what each event and property measures (e.g., Adoption Rate, Conversion Rate)

Remember, duplicates or erroneous values can lead to adverse consequences for people using your data.
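A convention like this can also be checked automatically. The sketch below assumes one possible convention (Title Case words separated by spaces, object first, past-tense action last) and flags names that break it, plus groups of names that differ only in casing or separators. The function names are hypothetical, and the past-tense check is a crude heuristic (it only looks for a final word ending in "ed"), so treat this as a starting point rather than a complete linter.

```python
import re
from collections import defaultdict

# Assumed convention: two or more Title Case words separated by single spaces.
TITLE_CASE = re.compile(r"^[A-Z][a-z0-9]*( [A-Z][a-z0-9]*)+$")


def follows_convention(name: str) -> bool:
    """Title Case with spaces, ending in a past-tense action (rough 'ed' heuristic)."""
    return bool(TITLE_CASE.match(name)) and name.split()[-1].endswith("ed")


def normalize(name: str) -> str:
    """Collapse casing and separators so near-duplicates compare equal."""
    spaced = re.sub(r"(?<=[a-z0-9])(?=[A-Z])", " ", name)  # split camelCase
    words = re.split(r"[\s_\-]+", spaced.strip())
    return " ".join(w.lower() for w in words if w)


def check_names(names):
    violations = [n for n in names if not follows_convention(n)]
    groups = defaultdict(list)
    for n in names:
        groups[normalize(n)].append(n)
    duplicates = [g for g in groups.values() if len(g) > 1]
    return violations, duplicates


names = ["Button Clicked", "button_clicked", "Trial Started", "buttonClicked", "Clicked Button"]
violations, duplicates = check_names(names)
print(violations)  # ['button_clicked', 'buttonClicked', 'Clicked Button']
print(duplicates)  # [['Button Clicked', 'button_clicked', 'buttonClicked']]
```

Note that "Clicked Button" is flagged for word order but is not grouped as a duplicate, because this sketch only normalizes casing and separators, not the order of object and action.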

Your tracking plan is a little like a language of its own. Consistency and continuity are vital to maintaining ease of understanding between your stakeholders. Discipline and care make it all work.

Establish a system for version control

Without an established system for version control, tracking plan management can get messy, and updates between different versions, made by different teams, can cause conflicts within your code and schema. Having an established VCS (version control system) and process helps you avoid these disconnects.

This is particularly important in the context of team collaboration. The point of your tracking plan is to de-silo teams and improve knowledge-sharing. For that reason, multiple teams will be working on the same tracking plan. You may find mistakes are made, particularly in the first month or two of using your plan, when everyone is getting used to the standardized procedure.

Teams traditionally feel the pain of poor version control when they’re over-reliant on Google Sheets (bad doc history features have a lot to answer for). Worse still, they might be trying to manage tracking plan versions through multiple files (e.g., Data Plan v23). What your team needs instead is a single source of truth, like Avo or a similar tool.

Beyond updating your tech stack, you can improve version control by adopting branched workflows. This is easy if you’re keeping your tracking plan in JSON documents and collaborating in Git, which allows you to bump a version every time you update your plan. It also lets you check for conflicts and dependencies within your codebase, as you would during regular QA checks of code pre-deployment.
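As a minimal sketch of what bumping a version could look like, the example below compares two JSON versions of a plan and picks the next version number. The JSON layout (`version` and `events` keys) and the bump rule (removing an event is a breaking change, adding one is a minor change) are assumptions for illustration, loosely modeled on semantic versioning; they are not a prescribed format.

```python
import json


def diff_events(old_plan: dict, new_plan: dict):
    """Return (added, removed) event names between two plan versions."""
    old_events = set(old_plan["events"])
    new_events = set(new_plan["events"])
    return sorted(new_events - old_events), sorted(old_events - new_events)


def bump_version(version: str, added, removed) -> str:
    """Assumed rule: removals bump major, additions bump minor, anything else bumps patch."""
    major, minor, patch = (int(part) for part in version.split("."))
    if removed:
        return f"{major + 1}.0.0"
    if added:
        return f"{major}.{minor + 1}.0"
    return f"{major}.{minor}.{patch + 1}"


# Hypothetical plan documents, as they might be stored in a Git repository.
old_plan = json.loads(
    '{"version": "1.2.0", "events": {"Branch Opened": {}, "Branch Merged": {}}}'
)
new_plan = json.loads(
    '{"version": "1.2.0", "events": {"Branch Opened": {}, "Branch Merged": {}, "Branch Closed": {}}}'
)

added, removed = diff_events(old_plan, new_plan)
new_version = bump_version(old_plan["version"], added, removed)
print(added, removed, new_version)  # ['Branch Closed'] [] 1.3.0
```

A check like this could run in review before a plan change is merged, so that removals are never shipped without someone noticing that downstream reports may break.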

Make tracking plan management (and revising) easier with Avo

There's nothing wrong with changing your tracking plan design post-release. In fact, it's a great thing to do. Acknowledging that your tracking plan is a little off somewhere, or that more data would help you build a better product, is the first step to improvement. Once you've established that you need more (or different) data than you originally planned, it's time to get to work adding new events and properties so that you can get better answers down the road.

Nevertheless, tracking plans can be demanding, even with the most disciplined stakeholders in the world. That's why it comes in handy to use a tool like Avo. With Avo, you can keep track of all your events and properties easily in one place. It helps you enforce best practices, like standardizing casing and preventing duplicate events and properties from occurring. Avo also lets you seamlessly push updates to developers for every platform from a single, accessible source of data truth.

Try Avo today for better product decision-making and a cleaner tracking plan.
